The Grassi Box is a MIDI-to-relay piece of hardware (built by Dan Wilson of Circitfied) based on a Teensy, an Arduino-compatible microcontroller board, along with some accompanying software tools built in Max. The idea is to be able to control a variety of my ciat-lonbarde instruments from a computer.

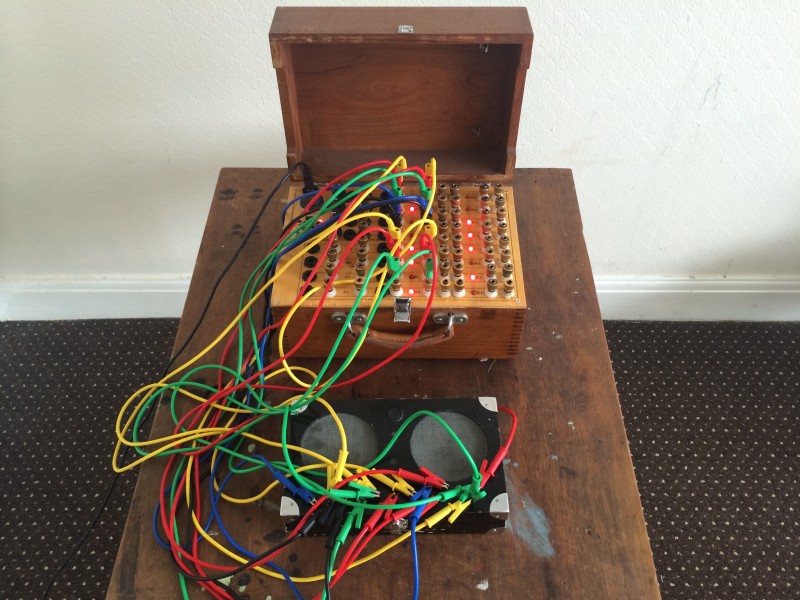

Firstly, it looks and sounds like this:

The two initial methods of control I’ve built are ‘random patch from attack detection’ (as seen in the video above) and an analysis-based resynthesis similar to my C-C-Combine Max patch (as seen in the video below), which uses hardware synthesis/sounds instead of the body (corpus) of samples normally used in concatenative synthesis. I built these two methods of control, along with an assortment of other software tools, into a set of abstractions so that I can easily build more complex patches for the system without needing to code much from the ground up. The other tools include a DMX control module (as seen in both videos) and other low-level data handling components.
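To make the ‘random patch from attack detection’ idea concrete, here is a minimal Python sketch rather than the actual Max abstraction. Everything in it is an assumption for illustration: the threshold-with-re-arm onset detector, the `threshold` and `release` values, and the limits of 16 relays with at most 5 closed at once.

```python
import random

def detect_onsets(envelope, threshold=0.2, release=0.1):
    """Return the indices where an amplitude envelope first crosses `threshold`.

    The detector only re-arms once the envelope has dropped below `release`,
    so a single sustained sound produces a single onset. (Assumed detector,
    not the one used in the original patch.)
    """
    onsets = []
    armed = True
    for i, level in enumerate(envelope):
        if armed and level >= threshold:
            onsets.append(i)
            armed = False
        elif not armed and level < release:
            armed = True
    return onsets

def random_patch(num_relays=16, max_active=5, rng=random):
    """Pick a random set of 1..max_active relay indices to close."""
    count = rng.randint(1, max_active)
    return sorted(rng.sample(range(num_relays), count))

# A made-up envelope containing two attacks.
envelope = [0.0, 0.05, 0.6, 0.5, 0.3, 0.05, 0.0, 0.4, 0.45, 0.02]
onsets = detect_onsets(envelope)

# One new random patch per detected attack.
patches = [random_patch(rng=random.Random(i)) for i in onsets]
```

Each detected attack simply swaps in a fresh random set of connections, which is the whole behaviour: no analysis of the instruments is needed for this mode.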

The reason I went with a Teensy instead of a regular Arduino was its built-in MIDI-over-USB functionality. You can do this with a regular Arduino, but you have to reflash its USB interface chip every time you want to alter the code. Because of how the Teensy is designed, you can still program it while it is in USB MIDI mode.

Click here to download the Teensy code.

The hardware around the Teensy, as built by Circitfied, takes the voltage from the Teensy’s digital pins and uses it to drive transistors, which in turn switch relays. There are 16 of these transistor/relay pairs in total. The box also has some additional features, including audio jacks for easy relay-based gating, passive filters, and exposed pins for using the NO (normally open) and NC (normally closed) contacts of the relays.
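On the computer side, switching relays comes down to sending MIDI messages to the Teensy. The sketch below shows one way a patch change could be turned into raw MIDI bytes. The mapping is an assumption, not taken from the Teensy code: relay *n* is taken to respond to note number `BASE_NOTE + n` on a single channel, with note-on closing the relay and note-off opening it.

```python
NUM_RELAYS = 16
BASE_NOTE = 0   # assumed: relay 0 listens on MIDI note 0
CHANNEL = 0     # assumed: MIDI channel 1 (stored 0-based in the status byte)

def patch_to_midi(previous, target):
    """Return the raw 3-byte MIDI messages needed to move from the
    `previous` set of closed relays to the `target` set.

    Only relays whose state actually changes get a message.
    """
    messages = []
    for relay in range(NUM_RELAYS):
        was_on = relay in previous
        now_on = relay in target
        if was_on == now_on:
            continue
        status = (0x90 if now_on else 0x80) | CHANNEL  # note-on / note-off
        velocity = 127 if now_on else 0
        messages.append(bytes([status, BASE_NOTE + relay, velocity]))
    return messages

# Relay 3 stays closed, relay 0 opens, relay 5 closes.
messages = patch_to_midi({0, 3}, {3, 5})
```

Sending only the differences keeps relays that are shared between two patches from chattering on every change.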

Here is a video using audio analysis to resynthesize the incoming audio using ciat-lonbarde Fourses and Fyrall as the source instruments:

The analysis-based resynthesis works by first setting up a series of connections between the Grassi Box and the source instruments, then deciding how many total and simultaneous connections you want possible (16 total, with 5 simultaneous, in the above video). After that, the patch is run, which steps through every possible combination/permutation of connections and analyzes each one for a set of audio descriptors (loudness, pitch, spectral centroid, and roughness). It took about 15 minutes to analyze the 6884 combinations/permutations needed for the video using a 100 ms analysis window. Once the combinations/permutations are analyzed, a different audio input is fed into the matching engine (in this case, prepared/bowed guitar). The engine analyzes the incoming audio in real time, finds the nearest stored combination given certain descriptor weights, and connects that patch. So essentially it’s creating dynamic hardware repatching based on the incoming audio.
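The matching step can be pictured as a weighted nearest-neighbour lookup over the analyzed patches. The Python sketch below is an illustration under assumptions, not the Max implementation: the descriptor values are invented and taken to be normalized to 0..1, the distance is a weighted Euclidean one, and the search is a plain linear scan.

```python
import math

DESCRIPTORS = ("loudness", "pitch", "centroid", "roughness")

def nearest_patch(database, query, weights):
    """Return the patch (a tuple of relay indices) whose stored descriptors
    are closest to `query` under a weighted Euclidean distance."""
    def distance(entry):
        return math.sqrt(sum(
            weights[name] * (entry[name] - query[name]) ** 2
            for name in DESCRIPTORS
        ))
    best_patch, _ = min(database.items(), key=lambda item: distance(item[1]))
    return best_patch

# Invented analysis results for three patches.
database = {
    (0,):      {"loudness": 0.2, "pitch": 0.3, "centroid": 0.1, "roughness": 0.0},
    (0, 4):    {"loudness": 0.8, "pitch": 0.5, "centroid": 0.6, "roughness": 0.4},
    (2, 7, 9): {"loudness": 0.5, "pitch": 0.9, "centroid": 0.7, "roughness": 0.9},
}

# Descriptors measured from one frame of the incoming audio (also invented).
query = {"loudness": 0.7, "pitch": 0.6, "centroid": 0.5, "roughness": 0.5}

equal_weights = {name: 1.0 for name in DESCRIPTORS}
pitch_only = {"loudness": 0.0, "pitch": 1.0, "centroid": 0.0, "roughness": 0.0}

match_equal = nearest_patch(database, query, equal_weights)
match_pitch = nearest_patch(database, dict(query, pitch=0.85), pitch_only)
```

Changing the weights changes which patch wins for the same input, which is what lets the matching favour, say, pitch over loudness.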

I decided to approach the (computer) software side of things by creating a set of abstractions for Max, which I could then piece together quickly and easily for any given patch I needed. There are two ‘heavy lifting’ abstractions, one dealing with onset-based repatching and the other with analysis-based repatching, the latter being the more complicated of the two. The core of that patch is a bit of JavaScript code which calculates all the combinations and permutations needed for any given setup. A close friend, Braxton Sherouse, helped with the core function in the JavaScript code.
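As a rough idea of what that enumeration involves, here is a short Python sketch (not the JavaScript from the abstractions). It assumes a patch is an unordered set of 1 to `max_simultaneous` closed connections out of `total`. Counting that way for 16 total and 5 simultaneous gives exactly the 6884 figure quoted above, which suggests, but does not confirm, that the original counts patches the same way.

```python
from itertools import combinations
from math import comb

def all_patches(total, max_simultaneous):
    """Yield every set of 1..max_simultaneous connections out of `total`,
    each as a tuple of connection indices."""
    for size in range(1, max_simultaneous + 1):
        yield from combinations(range(total), size)

patches = list(all_patches(16, 5))
count = len(patches)

# Cross-check against the closed form: C(16,1) + C(16,2) + ... + C(16,5).
expected = sum(comb(16, k) for k in range(1, 6))

# Pure analysis time at one 100 ms window per patch, in minutes.
analysis_minutes = count * 0.1 / 60
```

At one 100 ms window per patch the analysis alone comes to roughly 11.5 minutes; the remaining time in the reported 15 minutes is presumably switching and settling overhead, though the post does not break that down.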

Click here to download the g.box abstractions.

Click here to view a schematic of one channel.

this is great, thank you for sharing all this.

rodrigo, this is very cool stuff, effective use of software/hardware interfacing. Since you’ve made the code available I wonder if you have any specs or schematic representations of the circuitry used in the box itself. I have a couple teensy boards lying around in wait for a project and this looks like something I could build and use in concert with an upright bass for triggering changes.

I don’t have a schematic, but I can ask the guy who built it for me. It’s not a terribly complicated circuit, I just don’t have the stomach for that kind of building/troubleshooting.